Hi 👋, I'm Shawn!

Nice to meet you.

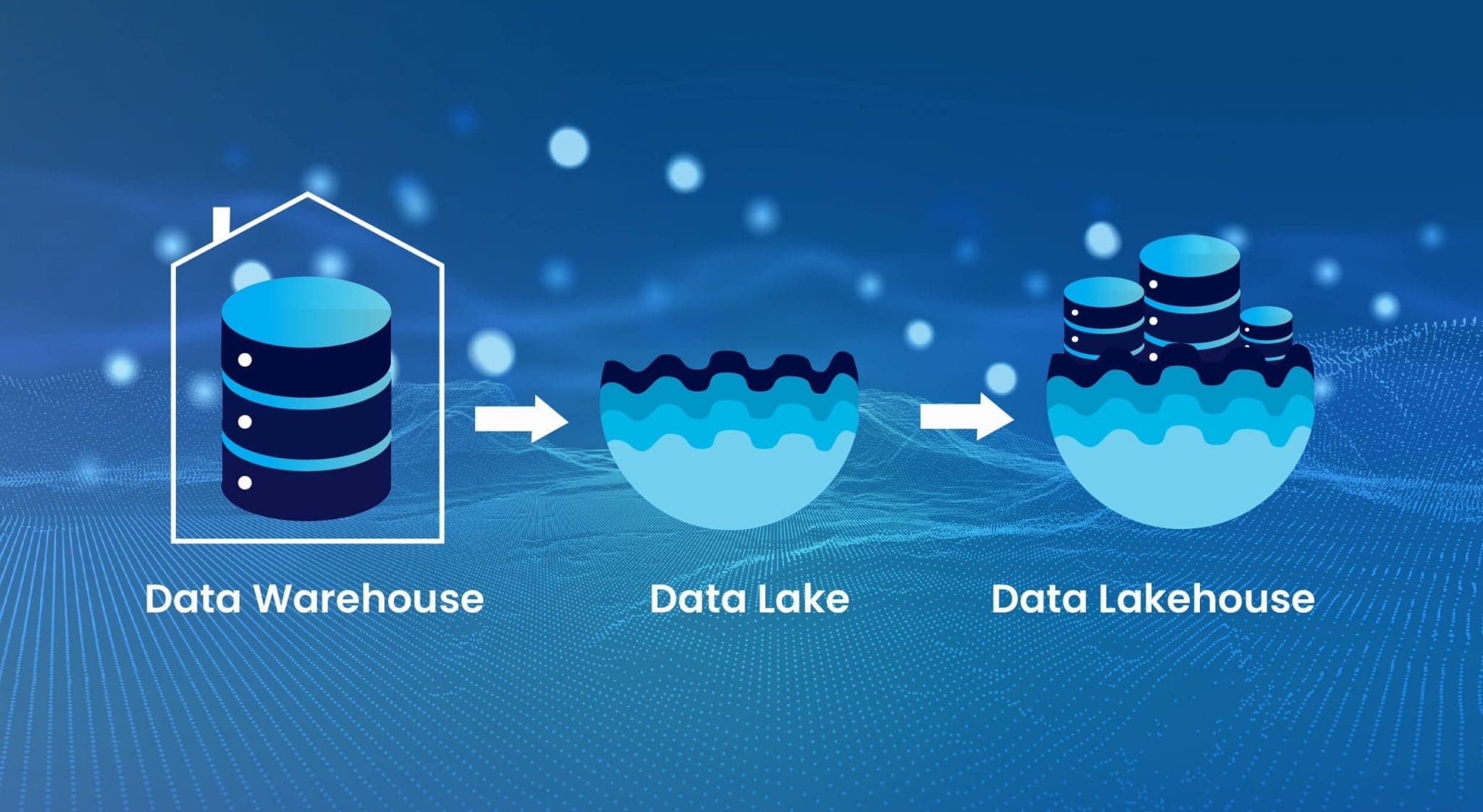

Senior Data Solution Architect with 11+ years’ experience designing and optimizing scalable data solutions. Expert in ETL pipelines (Talend, NiFi, Airflow, Informatica), big data processing, and cloud architectures across AWS, Azure, and GCP. Skilled in data warehouse modeling (Star, Snowflake, Data Vault) and big data tools (Hadoop, Spark, Kafka, HDFS) for batch processing and real-time streaming. Strong in data governance, including data quality, metadata management, and compliance (HIPAA, GDPR). Experienced in deploying ML models (Scikit-learn, TensorFlow, PyTorch) via Databricks. Proficient in data visualization (Tableau, Power BI, QuickSight, Plotly) to deliver insights. Adept in DevOps practices with Docker, Kubernetes, and CI/CD pipelines for efficient delivery.

Featured

A little more about me!

Skills

A quick snapshot of my toolkit

Experience

Data Solution Architect

Technologies

Highlights

- Architected and deployed real-time data streaming infrastructures using Apache Kafka, Apache Flink, and AWS Kinesis, enabling 99.9% uptime for data pipelines and improving supply chain visibility and operational responsiveness by 35%.

- Designed and implemented scalable, cloud-native data architectures across AWS, Azure, and GCP, integrating Amazon Redshift, Google BigQuery, Azure Data Lake, Databricks Lakehouse, and Snowflake, leading to a 50% reduction in infrastructure costs and enhanced performance elasticity.

- Led the end-to-end migration of on-premises data warehouses to modern cloud ecosystems, leveraging Snowflake, Delta Lake, and Databricks, resulting in a 60% improvement in query performance and a 70% decrease in maintenance overhead.

Senior Data Engineer

Technologies

Highlights

- Designed and maintained cloud-scale ELT pipelines using Spark, Snowflake, and Airflow to support analytics and reporting.

- Implemented data contracts aligned with Data Mesh principles for decentralized ownership across distributed teams.

- Integrated Snowflake for cloud warehouse migrations, optimizing query performance and enabling real-time analytics dashboards.

Data Engineer

Technologies

Highlights

- Implemented data governance frameworks, including data lineage, access control, and regulatory compliance for healthcare datasets.

- Architected and implemented a data lake on Google Cloud Platform (GCP), enhancing data accessibility and cross-functional analytics.

- Developed and automated data quality validation frameworks using Great Expectations, reducing data discrepancies by 40%.

Projects

Real-Time Healthcare Analytics Platform

Designed and led the development of a real-time healthcare analytics platform integrating EHR and claims data using Apache Kafka, Apache Flink, and AWS Kinesis.

Enabled predictive insights for population health management and reduced data processing latency by 60%.

Deployed HIPAA-compliant data pipelines with Apache NiFi and Airflow on AWS, enhancing care quality and regulatory compliance.

Cloud Data Lakehouse Migration

Led the migration of legacy on-premises data infrastructure to a unified cloud-based lakehouse using Databricks and Delta Lake on Azure.

Streamlined ETL workflows using Apache Spark and Talend, improving data refresh rates by 70%.

Integrated machine learning models with MLflow to forecast energy demand, increasing predictive accuracy by 30%.

Financial Data Pipeline Modernization

Developed scalable ETL pipelines with Apache Beam, Python, and Google Cloud Dataflow, processing over 10 million financial records daily.

Designed a cloud-native data lake on GCP, enabling seamless access to structured and unstructured data for cross-team analytics.

Implemented automated data validation and quality checks using Great Expectations, reducing data inconsistencies by 40%.

ML Feature Store for Fraud Detection

Designed and deployed a centralized ML Feature Store using Databricks, MLflow, and Feast, enabling 3× faster model iterations. Reduced fraud detection false positives by 18% through real-time feature engineering.